FEATURE 6 March 2012

It’s Super Tuesday in the US – so what better time to debate the role of opinion polls in elections and whether they influence voting intentions or simply record them? Read and vote.

Opinion polls shape public opinion as much as they reflect it. That’s a view often espoused by (typically unpopular) politicians worried that their low poll ratings will condemn them to certain defeat once an election rolls around. Pollsters, by and large, reject the charge – but behavioural economics shows us that there is some truth in it.

Pollsters are careful to avoid influencing the outcome of a poll through priming and order effects in the survey design, but there are many other stumbling blocks to bear in mind. Behavioural economics shows that in general people want to follow the crowd and not challenge the status quo. So if we see an opinion poll telling us what the majority thinks, believes or is doing then, in many circumstances, we will have a tendency to follow the herd.

Governments know this and are designing communications strategies which play to this heuristic. For example, Her Majesty’s Revenue and Customs has sent letters to tax debtors informing them that the majority of people in their area have paid their taxes. The proportion of people who paid what they owed after receiving the letter was about 15 percentage points higher than in a control group which received a differently worded letter.

“Behavioural economics shows that people don’t want to challenge the status quo. So if we see a poll telling us what the majority thinks, we will have a tendency to follow the herd”

Meanwhile, a 1987 paper by Greenwald et al., which appeared in the Journal of Applied Psychology, demonstrated that you can increase voting simply by asking people if they expect to vote. This is what behavioural economists call the commitment bias: ask someone if they are going to do something and, if they answer in the affirmative, they are more likely to feel compelled to follow through than if they had not been asked at all. Tell a field interviewer that you are going to vote Conservative at the next election and you’re more likely to end up doing so.

But, worryingly, behavioural economics shows us that the answer a person gives to an opinion poll – and, by extension, the course of action that they might be committing themselves to – might not be particularly well thought through. This particular quirk of human behaviour is known as the availability heuristic, which works on the assumption that “if you can think of it, it must be important”. For example, people worry more about being involved in a plane crash than they ever fret about being in a car accident, because they have more vivid memories of plane crashes – they are more ‘available’ to them in their memory.

What this tells us is that people are cognitively lazy – especially when it comes to answering questionnaires – and in our busy world, they tend not to spend a lot of time thinking deeply about things. Ask someone who they’re likely to vote for at the next general election and the answer you get might well be the name of the party or politician they’ve been hearing about the most, or the one that most other people say they’ll vote for.

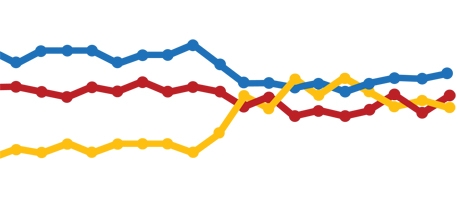

Every good theory needs evidence to support it. This one suggests that pre-election polls will underestimate the popularity of winning parties or popular candidates as their publication encourages more people to jump on the bandwagon. Unfortunately, the record of the polls in the UK back to 1983 shows the opposite: parties that appear popular do less well than the polls suggest, while those that appear to be less popular do better.

As recently as the 2010 election, polls suggested that the Liberal Democrats would make significant gains in their percentage share of the vote following the success of party leader Nick Clegg in the televised Prime Ministerial debates. Sticking with this theory, the Liberal Democrats should have done even better – but the record shows that all the polls overestimated support for the Liberal Democrats by an average of 3.4%.

Back in 1983 Labour wrote an election manifesto mocked as being “the longest suicide note in history”. Margaret Thatcher rode a wave of popularity based on victory in the Falkland Islands and positive economic indicators at home. But in that election the Conservatives did less well and Labour better than expected.

“The record of the polls in the UK dating back to 1983 shows that political parties that appear popular do less well than the polls suggest, while those that appear to be less popular do better”

Most famously, in 1992 the polls predicted the wrong result: that the Conservatives would suffer as a result of the poll tax, the unceremonious removal of Thatcher and a faltering economy. But John Major won a working majority, sparking a detailed search for the reasons for the polls’ failure. Many methodological changes followed, most of which are still employed today.

The theory that best explains this pattern has been around for decades. The ‘Spiral of Silence’ suggests that ordinary voters who see that the party they support is unpopular may become inclined to silence. When answering questions put to them in a poll, some may not want to admit their support for an unpopular party but instead claim they don’t know how they will vote or refuse to answer the question. Hence polls will tend to understate support for unpopular parties and overstate support for the more popular alternative – exactly what the evidence suggests.

Following the 1992 disaster, pollsters started to make adjustments to poll figures based on indications of the presence of a spiral of silence, which from 1983 to 2005 mostly consisted of those who were a bit ashamed to admit they were Conservative voters, commonly known as “shy Tories”. Partly as a result of these adjustments, the accuracy of the polls in the 2005 election was the best ever.

If, instead of boosting support for unpopular options as indicated by the Spiral of Silence, pollsters had added percentage points to the popular options on the basis of the possible presence of a bandwagon, throughout this period they would have made their polls substantially less – not more – accurate.

3 Comments

Pete Shreeve

12 years ago

Interesting to see the disconnect between the voting in favour of Yes and the quality of the arguments put forward. Are research-live readers just jumping on the bandwagon of Behavioural Economics? Nick's was full of facts and figures and reminded us of the issues faced in the past and what was done to rectify. Crawford used examples from different categories or on different issues in support of his argument. The one I have a problem with is Crawford's assertion that "Behavioural economics shows that in general people want to follow the crowd and not challenge the status quo. So if we see an opinion poll telling us what the majority thinks, believe or is doing then, in many circumstances, we will have a tendency to follow the herd." Nick has provided several examples where this is clearly not the case or at least if it does occur it is amongst a small number of voters. I warrant that a staunch supporter is unlikely to be persuaded by an opinion poll. Behavioural economics has many merits and there are many other areas where it is relevant, as Crawford points out, but the old saying "horses for courses" springs to mind here. What next? NetPromoter is the only metric businesses need to focus on!


Liz Barker

12 years ago

A recent article in New Scientist highlighted the bandwagoning effect - of sex surveys. Professor Anne Johnson at UCL co-heads the National Survey of Sexual Attitudes and Lifestyle. She commented on the need for scientifically conducted surveys since "Knowing about how behaviour varies in populations has a normalising effect. It shows people what proportion of the population are like them." So the bandwagon effect will depend a lot on the context of the survey. [New Scientist, 12/11/11]


Dave Walker

12 years ago

The two arguments are not really in opposition. It is quite possible that polls both influence AND overstate majority opinion. Crawford has explained how or why it might happen, but offers no evidence that it does. Sparrow hasn't proved it does not happen, only that if it happens it does not necessarily determine the outcome.
